Google’s Performance Max adds generative AI for ads: the floodgates are opening for all ad platforms

It seems like just yesterday that one of the core challenges of high-volume growth teams was scaling ad creative. Making the thousands of pieces of art needed for testing was hard, even with some rudimentary automation. But yesterday, Google announced that P-Max is adding generative AI for ads in beta, and it has some super-cool capabilities.

Over the next few months the floodgates will be opening for adtech platforms to make generative AI for ads a common core feature for all their advertisers. Right now many of the platforms are sandboxing AI-generated images in creative suites that help marketers make the images they want, but soon that will transition to on-the-fly creation of images and content personalized to individual people.

In that, the big platforms will have huge advantages.

Google’s Performance Max and generative AI for ads

P-Max’s generative AI is both impressive and limited.

It’ll take your brand ambassador and drop her into a corporate, home, beach, or country setting. It’ll generate headlines and descriptions, and let you create core assets to use in multiple ad types and backgrounds. If you already have product assets, P-Max will import them and allow you to try as many variations as you like. Google says it will never generate the exact same image twice, so your competitor won’t have an ad that looks shockingly similar to yours.

(Cue the let-me-try-to-prove-that-wrong crowd.)

It’s U.S.-only at the moment, and it is rolling out gradually, so not every advertiser will get access this week or even this month. If you’re in a sensitive vertical, such as politics or pharmaceuticals, you won’t get access either.

You won’t be able to create images of specific people or celebrities, for obvious reasons, or branded items. (My recent attempt to get OpenAI’s Dall-E to make a picture of Oprah failed, but Creative Diffusion allowed it.)

And all images will be watermarked with SynthID, so there’s a record that they are artificially created and some accountability for how they are used.

What P-Max’s generative AI for ads is not: a real-time, per-person, per-product solution that combines what Google knows about its users with what it knows about advertisers’ products and offers, and then crafts a completely personalized one-off ad in real time or near real time to maximize relevance.

Join the party: everyone’s doing it

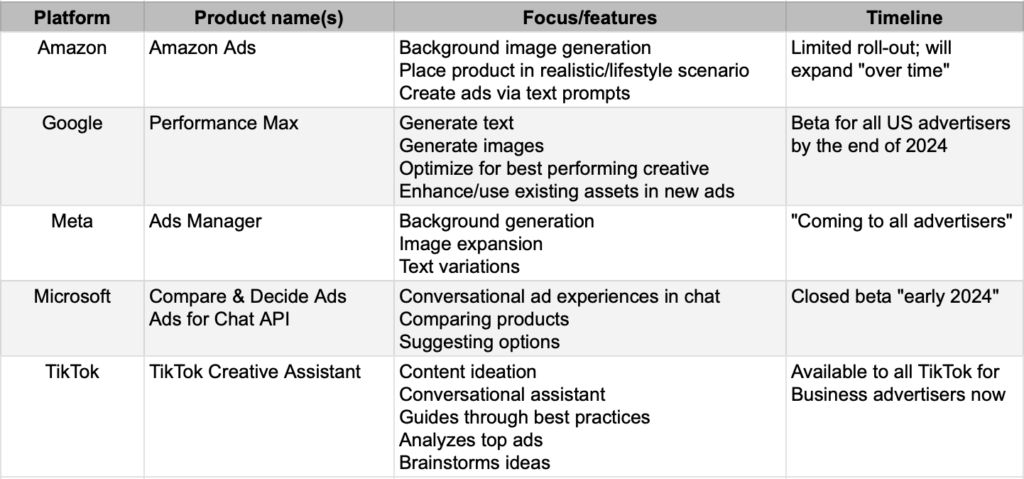

Everyone is joining the generative AI for ads party. Some are further ahead than others in their ability to integrate generative AI with ad tools.

- Google launched Performance Max generative AI ads; availability starts now

- Meta launched generative AI in Ads Manager: started last month, rolling out globally “by next year” for background generation, image expansion, and text variations

- Amazon launched image generation in beta last month, primarily focused on lifestyle backgrounds for product images

- Microsoft is adding Copilot to the Microsoft Advertising Platform, which will generate new images using Microsoft’s image library, as well as offer conversational chat … debuting in closed beta in “early 2024”

- TikTok just launched a generative AI “creative assistant” to “spark creativity and be a launchpad for curiosity” as you make ads for the platform

- Snap is running text ads in My AI, but not generative AI in ads yet

- Pinterest hasn’t announced anything yet

- Reddit hasn’t announced anything yet

- Big brands are using Dall-E and other tools to make their own generative AIs

- Agencies like WPP, Publicis, Omnicom are doing the same

Ultimately, this will be a standard feature and a checkbox item on all major platforms, and at most significant ad agencies and ad networks.

The big platform advantage for on-the-fly generative AI, and what’s next

While all advertising platforms will likely add generative AI for ads tools, add-ons, or plugins eventually, the biggest platforms have huge advantages. Not only can they throw more engineers and more money at the challenges of bringing generative AI to their advertising tools, and so get there quicker, they also have an on-platform data advantage.

That means that when it comes to on-the-fly generative AI creation, they can do it with much greater knowledge of their users’ interests, habits, and behaviors, giving them a much greater chance of crafting a message that resonates.

There’s still a lot of work to be done, of course, as the platforms themselves acknowledge. One big area of improvement: allowing marketers to define brand colors, imagery, styles, even conversational tones so that the ads they generate fit the brand and build the brand.

“There is still work to do on delivering outputs customized to every brands’ unique voice and visual style,” Meta says. “We’ll need to define new ways of partnering with brands and agencies to help train these models on brands’ unique perspective.”

The other is doing this all in a way that is cost-effective on GPU time. Especially for on-the-fly generative AI, the GPU load is going to be intense.

Snap is already thinking about that, and has plans to do the work on-device. I’m not sure that will work in all cases, but with the kinds of chips that Apple is putting in its devices these days, and the new Snapdragon 8 Gen 3 chip on Android, that’s likely to be a possibility at some point.
