
Summary

- Adopt Unified Measurement Frameworks: Move from deterministic attribution to a blended measurement system that combines platform-reported and first-party data. Use incrementality testing to guide budget allocation and assess performance holistically, not in silos.

- Enhance Creative Velocity for Performance: Prioritize rapid creative experimentation by testing new concepts weekly and aligning user acquisition (UA) with app store optimization (ASO) strategies. Treat creative development as a performance engine that directly influences campaign effectiveness and return on ad spend (ROAS).

- Leverage AI for Operational Efficiency: Use AI tools to automate reporting, surface performance anomalies, and generate creative variations quickly. This shortens the time from signal to decision and makes budget reallocations and campaign adjustments faster.

The rules of growth have changed again.

Modeled attribution is standard. Privacy updates continue to reshape signal availability. Creative fatigue hits faster. And AI in app marketing has moved from experiment to infrastructure.

In our recent webinar, growth leaders aligned around one central idea: the 2026 app growth strategy is not about chasing cheaper installs. It is about building a resilient system that blends measurement, creative velocity, AI-powered analysis, and disciplined experimentation.

Here is what executive teams need to focus on.

Why the 2026 app growth strategy demands a structural shift

The performance playbook that worked five years ago relied on deterministic attribution, a handful of dominant platforms, and reactive reporting cycles.

That model no longer holds.

Recent industry shifts underscore why:

- Apple’s ongoing privacy updates, including changes discussed around iOS 26 and WWDC, continue to reshape signal availability and modeling approaches. See our breakdown of the iOS 26 privacy updates from WWDC and our coverage of the WWDC 2025 AdAttributionKit updates.

- SKAN continues to evolve. Understanding postback mechanics and modeling is now table stakes. Read our guide on Apple Ads and SKAN postbacks.

- Google’s recent integrated conversion measurement announcements signal deeper modeling across ecosystems. See our analysis of Google’s integrated conversion measurement update.

- The privacy landscape remains fluid. Our coverage of the "RIP Privacy Sandbox" moment shows how quickly assumptions can change.

The implication is clear: attribution is no longer deterministic. It is blended and modeled.

The modern 2026 app growth strategy must accept that reality and operationalize around it.

Pillar 1: unified, advanced measurement

The webinar reinforced a critical mindset shift: you do not need perfect attribution to scale. You need unified measurement that blends available signals and fills gaps with modeling.

Recent developments support this approach:

- Apple Search Ads and AdAttributionKit integrations are redefining how attribution flows in iOS environments. See our detailed overview of Apple Search Ads, SKAdNetwork, and AdAttributionKit.

- Meta’s Advanced AEM and modeled SAN frameworks require marketers to contextualize platform data. Our blog on Advanced AEM explains what this means for measurement.

- Our deep dive on advanced mobile measurement outlines why incrementality testing and blended ROAS must become standard practice.

- The broader evolution is captured in our analysis of mobile measurement in 2025, which sets the stage for what 2026 demands.

Executives should ask:

- Are we blending platform-reported and first-party data?

- Are incrementality tests built into our planning cycle?

- Do leadership dashboards reflect unified performance, not siloed metrics?

The goal is directional confidence and efficient capital allocation, not theoretical precision.
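To make "incrementality" and "blended ROAS" concrete, here is a minimal sketch with hypothetical numbers. It assumes a simple holdout test (a treatment group exposed to ads and a control group held out) and defines blended ROAS as total revenue from all sources divided by total paid spend. Definitions vary by team, and the function names and figures below are illustrative only.

```python
from dataclasses import dataclass


@dataclass
class HoldoutResult:
    """Hypothetical results of a user-level or geo holdout test."""
    treatment_users: int
    treatment_conversions: int
    control_users: int
    control_conversions: int


def incremental_lift(r: HoldoutResult) -> float:
    """Relative lift: (treatment rate - control rate) / control rate."""
    t_rate = r.treatment_conversions / r.treatment_users
    c_rate = r.control_conversions / r.control_users
    return (t_rate - c_rate) / c_rate


def incremental_conversions(r: HoldoutResult) -> float:
    """Treatment conversions beyond what the control rate would predict."""
    c_rate = r.control_conversions / r.control_users
    return r.treatment_conversions - c_rate * r.treatment_users


def blended_roas(total_revenue: float, total_paid_spend: float) -> float:
    """Blended ROAS: all revenue (paid and organic) over all paid spend."""
    return total_revenue / total_paid_spend


if __name__ == "__main__":
    # Hypothetical test: 1.2% conversion with ads vs. 1.0% without.
    test = HoldoutResult(treatment_users=100_000, treatment_conversions=1_200,
                         control_users=100_000, control_conversions=1_000)
    print(f"lift: {incremental_lift(test):.0%}")
    print(f"incremental conversions: {incremental_conversions(test):.0f}")
    print(f"blended ROAS: {blended_roas(450_000, 300_000):.2f}")
```

In this example, platform dashboards might credit all 1,200 treatment conversions to paid media, while the holdout suggests only about 200 were incremental. That gap is why incrementality tests belong in the planning cycle.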

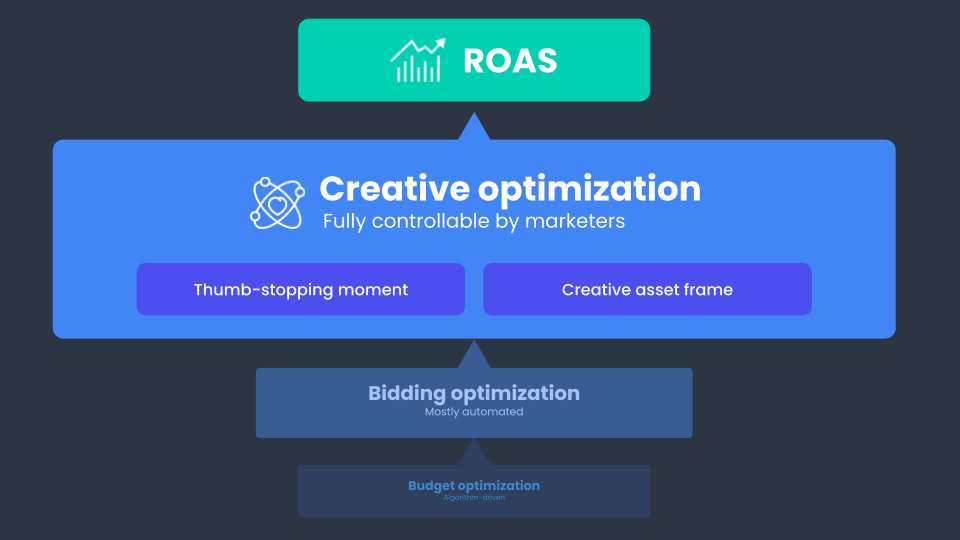

Pillar 2: creative velocity as a performance engine

Across the panel, one theme stood out: creative is the primary lever left to pull.

Targeting is constrained. Algorithms are mature. The variable that remains within your control is creative throughput.

Creative velocity in 2026 means:

- Testing more net-new concepts weekly

- Iterating based on performance signals in days, not weeks

- Aligning paid creative themes with ASO strategy

Creative is no longer a branding afterthought. It is a performance multiplier.

Executive takeaways:

- Fund creative experimentation as infrastructure, not campaign spend.

- Measure asset-level ROAS.

- Align UA and ASO teams to reinforce messaging loops.
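Asset-level ROAS is attributed revenue divided by spend, computed per creative rather than per campaign. A minimal sketch, assuming you can export rows of (creative ID, spend, attributed revenue). In a modeled-attribution world the revenue figures are themselves estimates, and the creative IDs and numbers here are hypothetical.

```python
from collections import defaultdict

# Hypothetical export rows: (creative_id, spend, attributed_revenue)
rows = [
    ("hook_video_v1", 1_000.0, 1_400.0),
    ("hook_video_v1", 500.0, 550.0),
    ("ugc_testimonial", 800.0, 1_600.0),
    ("static_banner", 700.0, 350.0),
]


def asset_roas(rows):
    """Aggregate spend and revenue per creative, then return ROAS per creative."""
    spend = defaultdict(float)
    revenue = defaultdict(float)
    for creative_id, s, r in rows:
        spend[creative_id] += s
        revenue[creative_id] += r
    # Skip creatives with zero spend to avoid division by zero.
    return {cid: revenue[cid] / spend[cid] for cid in spend if spend[cid] > 0}


if __name__ == "__main__":
    ranked = sorted(asset_roas(rows).items(), key=lambda kv: kv[1], reverse=True)
    for cid, roas in ranked:
        print(f"{cid}: {roas:.2f}")
```

Ranking creatives this way shows which concepts deserve iteration and which should be retired before fatigue drags down campaign-level results.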

The growth loop model discussed in the webinar reinforces this point. Paid performance informs organic optimization. Organic insights inform paid testing. Lifecycle messaging improves LTV before paid budgets expand.

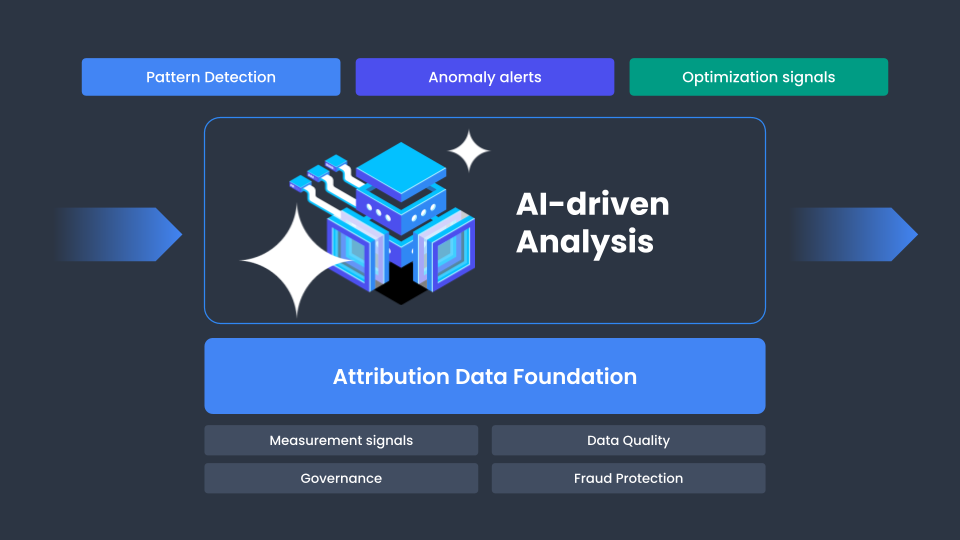

Pillar 3: AI as operational infrastructure

The conversation around AI has matured.

This is no longer about flashy demos. It is about reducing reporting friction and accelerating decision cycles.

AI in app marketing is being used to:

- Surface anomalies across channels faster

- Summarize performance shifts

- Generate creative variations for rapid testing

- Assist growth managers with natural-language data queries

The competitive edge is speed-to-insight.

If your reporting cycle still depends on manual spreadsheets and static dashboards, you are operating at a structural disadvantage.

The business impact:

- Faster budget reallocations

- Reduced analysis lag

- More confident experimentation

AI compresses time between signal and action.
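Anomaly surfacing does not have to start with a complex model. As a hedged illustration, the sketch below flags a day whose metric deviates from the trailing window by more than a chosen number of standard deviations (a rolling z-score). This is a simple statistical baseline, not the AI tooling the panel described. The window length, threshold, and sample data are assumptions.

```python
from statistics import mean, stdev


def flag_anomalies(values: list[float], window: int = 7,
                   threshold: float = 3.0) -> list[int]:
    """Return indices whose value is more than `threshold` standard deviations
    from the mean of the preceding `window` values (rolling z-score)."""
    flagged = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            continue  # flat history: z-score undefined, so skip this day
        z = (values[i] - mu) / sigma
        if abs(z) > threshold:
            flagged.append(i)
    return flagged


if __name__ == "__main__":
    # Hypothetical daily installs for one channel; day 9 drops sharply.
    installs = [1010, 990, 1005, 998, 1002, 995, 1008, 1001, 997, 620, 1003]
    print(flag_anomalies(installs))
```

One known limitation: after an outlier enters the trailing window it inflates the standard deviation, so nearby days become harder to flag. A median-based variant is more robust, and production tools typically also account for weekly seasonality.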

Pillar 4: diversification without fragmentation

Another clear takeaway: channel concentration is a risk.

However, diversification must be disciplined.

Teams are testing programmatic DSPs, rewarded placements, and emerging formats, but they validate incrementality before scaling.

Recent regulatory and ecosystem events, including high-profile developments like France’s fine against Apple, reflect how quickly platform dynamics can shift. See our coverage of France fining Apple.

The lesson is strategic: build optionality.

Your 2026 app growth strategy should:

- Avoid over-concentration in one platform

- Validate new channels with lift testing

- Align diversification with creative testing capacity

Diversification without measurement discipline creates noise. Diversification with incrementality validation builds resilience.
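One way to make "avoid over-concentration" measurable is a concentration index over channel spend shares. The sketch below uses the Herfindahl-Hirschman Index (HHI), the sum of squared shares, borrowed from competition economics. It is one possible metric, not one the webinar prescribed, and the channel names, spend figures, and alert threshold are hypothetical.

```python
def spend_shares(spend_by_channel: dict[str, float]) -> dict[str, float]:
    """Convert absolute spend per channel into fractional shares of total spend."""
    total = sum(spend_by_channel.values())
    return {ch: s / total for ch, s in spend_by_channel.items()}


def hhi(spend_by_channel: dict[str, float]) -> float:
    """Herfindahl-Hirschman Index on a 0-1 scale: 1.0 means all spend sits in
    one channel; 1/n means spend is split evenly across n channels."""
    return sum(share ** 2 for share in spend_shares(spend_by_channel).values())


if __name__ == "__main__":
    # Hypothetical monthly paid spend by channel.
    spend = {"channel_a": 60_000, "channel_b": 25_000,
             "channel_c": 10_000, "channel_d": 5_000}
    index = hhi(spend)
    top_channel, top_share = max(spend_shares(spend).items(), key=lambda kv: kv[1])
    print(f"HHI: {index:.3f}, largest channel: {top_channel} at {top_share:.0%}")
    MAX_HHI = 0.40  # hypothetical internal alert threshold
    if index > MAX_HHI:
        print("Budget is concentrated; review the diversification plan.")
```

Tracking the index monthly turns the scorecard item "budget concentration monitored" into a number with a threshold. Each new channel still needs its own lift test before its share grows.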

A practical executive scorecard for 2026

Use this as a quick internal audit.

Measurement

- Unified dashboards blending modeled and deterministic data

- Quarterly incrementality testing

- Blended ROAS guiding budget allocation

Creative

- Weekly net-new concept testing

- Asset-level performance tracking

- UA and ASO alignment

AI integration

- Self-serve data querying

- Automated anomaly alerts

- Reduced reporting cycle time

Diversification

- Budget concentration monitored

- New channels validated through lift testing

- Cross-channel messaging loops implemented

If multiple boxes remain unchecked, there is an opportunity to evolve before competitors do.

Common mistakes to avoid

- Waiting for attribution clarity: Measurement will remain modeled. Build confidence through validation, not perfection.

- Treating AI as an experiment: AI should streamline workflows, not sit in a sandbox.

- Scaling without incrementality validation: Platform-reported metrics alone can mislead.

- Underinvesting in creative throughput: Fatigue accelerates. Volume and iteration speed matter.

The bottom line

The 2026 app growth strategy is about system design.

Winning teams will:

- Blend signals instead of debating them

- Treat creative as a performance lever

- Use AI to move faster

- Diversify with discipline

They will focus on resilient LTV-driven growth, not short-term CPI wins.

To go deeper into the frameworks and examples discussed:

Watch the on-demand webinar.

Download the free 2026 Growth Playbook guide featuring expert insights, podcast-style interviews, practical frameworks, and best practices from across the ecosystem.

The path forward is clear. Build a system that adapts. 2026 will reward disciplined operators.