Fake security features in mobile attribution SDKs

I often hear security questions from customers about our mobile attribution SDK. They usually come up when companies are evaluating a new attribution provider and either submit an RFP/RFI document or run their own checklists. What’s interesting is that nine times out of ten, the SDK security questions center on two topics:

- Do you have an open/closed source SDK?

- Do you have an SDK encryption mechanism?

These questions are natural: stakeholders want to make responsible decisions for their business. This is especially true today, when the mobile measurement partner (MMP) is the source of truth, and one that fraudsters are constantly trying to manipulate.

The problem is: these mechanisms, and some others, are over-hyped by other MMPs and not real security measures. They’re the absolute basics, like remembering to lock the door when you leave the office.

But they don’t offer any real protection. Instead, they provide a false sense of security.

In this article, I’ll explain a bit more about why SDK security is such a difficult problem, why the aforementioned mechanisms aren’t real security, and what Singular’s doing to continue to provide strong protection against fraud.

What’s so hard about securing the SDK?

SDKs are pieces of code that run inside a mobile app. Their main function is to collect and report data like app opens, user events, revenue, and metrics to a server (e.g., Singular’s servers). They also support some functionality like deep linking, fraud prevention, etc.

Since apps communicate with their servers over the internet, there’s an inherent challenge of verifying this communication is indeed originating from a real device and a real user.

Two of the most commonly used techniques for securing SDK communication are adding encryption and closing the source. The point is to make authentic communication hard to fake, but both are really security through obscurity, which is a big “no no” in the world of security. As a result, advertisers are left with a false sense of safety and become easy pickings for fraudsters.

The best analogy is the wax seal, used in the Middle Ages to seal letters and authenticate the sender. Sadly, wax seals aren’t effective security tools today. Anyone motivated enough can produce a near-identical seal and fool the letter’s recipient into believing it’s an authentic communiqué.

SDK encryption

A standard play in the obfuscation game involves attempts to use encryption to “verify” that the data being sent by the SDK to the server is indeed authentic data.

Symmetric encryption and message authentication rely on a secret key shared between two parties. In our case, that would be the SDK and the server. The algorithm, combined with the secret key, enables you to create authenticated, encrypted messages.

While this sounds like a marvelous idea, there is one small flaw in the plan: the SDK that resides inside the app needs to know the secret itself. Most apps, even paid ones, are publicly available for download from the App Store or Play Store, which means anybody can get hold of the app binary, and with it the secret key. Not so secret anymore… is it?
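A minimal Python sketch of this flaw follows. It uses an HMAC-SHA256 signature from the standard library as a stand-in for whatever encryption or signing scheme a vendor might use; the key, event fields, and function names are all hypothetical and not taken from any real SDK. The point is only that a server checking a signature cannot tell a genuine SDK from anyone else who holds the same key.

```python
import hashlib
import hmac
import json

# Hypothetical key: in this sketch it stands for a secret compiled into
# the SDK, so every downloaded copy of the app contains it.
EMBEDDED_SDK_KEY = b"hypothetical-key-shipped-in-every-app"


def sign_event(event: dict, key: bytes) -> str:
    """Serialize the event deterministically and sign it with HMAC-SHA256."""
    payload = json.dumps(event, sort_keys=True).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def server_accepts(event: dict, signature: str,
                   key: bytes = EMBEDDED_SDK_KEY) -> bool:
    """Server-side check: recompute the signature and compare in constant time."""
    expected = sign_event(event, key)
    return hmac.compare_digest(expected, signature)


# A genuine SDK signs a real event.
genuine_event = {"event": "install", "device_id": "real-device-1"}
genuine_sig = sign_event(genuine_event, EMBEDDED_SDK_KEY)

# A fraudster who pulled the same key out of the public app binary
# signs an event for a device that never existed.
extracted_key = EMBEDDED_SDK_KEY
forged_event = {"event": "install", "device_id": "device-that-never-existed"}
forged_sig = sign_event(forged_event, extracted_key)

print(server_accepts(genuine_event, genuine_sig))  # True
print(server_accepts(forged_event, forged_sig))    # True: indistinguishable
```

The cryptography itself is sound in this sketch. What fails is the assumption that only the SDK knows the key.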

The way to extract the key is quite simple:

- Download the app binary (APK for Android, IPA for iOS)

- Depending on the platform, you may need to decrypt the binary with publicly available tools

- Reverse engineer the binary and get the SDK encryption key

For skilled individuals—certainly ones who are financially motivated (fraudsters)—this can be done in seconds if it’s automated by software, or minutes if done by hand.
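As a rough illustration of why automation makes this so fast, here is a Python sketch of the simplest possible case: a key stored as a plain string inside the binary. It scans a byte blob for long runs of printable ASCII, much like the Unix `strings` utility does. The blob, the `sdk_key=` marker, and the key are made up for the example. Real SDKs usually obfuscate their keys, which takes more effort to undo, but the principle is the same.

```python
import re
from typing import List


def printable_strings(blob: bytes, min_len: int = 16) -> List[str]:
    """Return every run of at least min_len printable ASCII bytes in blob."""
    pattern = re.compile(rb"[\x20-\x7e]{%d,}" % min_len)
    return [match.decode("ascii") for match in pattern.findall(blob)]


# Toy stand-in for an app binary: non-printable bytes surrounding a
# hypothetical embedded key. No real file format is being parsed here.
toy_binary = (
    b"\x00\x01\x7fELF" + b"\x00" * 8
    + b"sdk_key=hypothetical-key-shipped-in-every-app"
    + b"\x00\xff\x10" + b"ok" + b"\x00"
)

candidates = printable_strings(toy_binary)
print(candidates)
```

Short fragments such as `ELF` and `ok` fall below the length threshold, so only the long key-like string is reported.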

Does closed source matter?

Probably the best example of security through obscurity is the claim some vendors make about how their closed-source approach is “essential when fighting ad fraud,” while other vendors claim they “live by open source.”

Sadly, it’s all BS.

Since this is almost a religious matter for some people, I’ll avoid picking sides. Instead, I’ll simply explain why neither option really provides security against faked SDK traffic:

- Open source claims that by being open and transparent with your code, it’ll be easier to weed out bugs and to be audited. As such, you’re creating a more secure environment.

The obvious downside is that your entire security mechanism is open for all to see, so anyone can learn how it works (i.e., anyone can see how you generate your wax seal).

- Closed source claims that by keeping your code closed and obfuscated, it’ll be harder for outsiders to find bugs or audit how it works, and as such you’re creating a more secure environment.

While this makes it difficult for people to understand how your security mechanism works, any semi-skilled fraudster can use reverse engineering to recover something quite close to the original source code. Which means that if you try hard enough… you can still learn how the security mechanism works!

What you need to understand is that it’s all an obfuscation game, and it’s not real security.

How do we secure our mobile attribution SDKs?

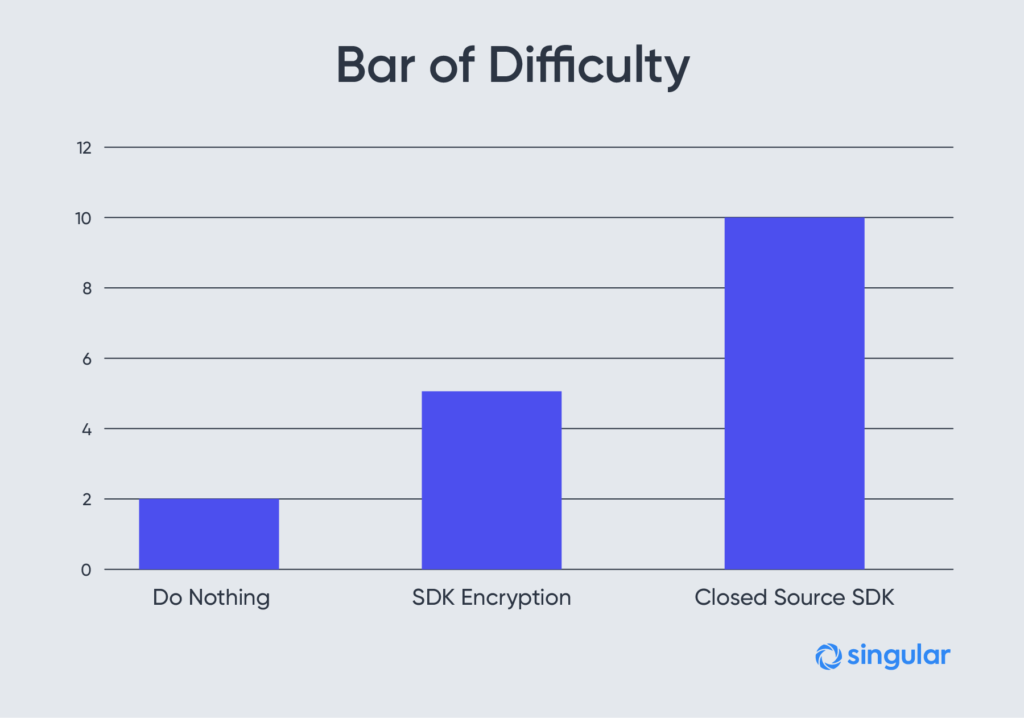

First off, we do the basics: a closed-source SDK and SDK encryption, both of which we’ve had since the first version of our SDK.

Second, we developed proprietary methods for iOS and Android that leverage a chain of trust. This chain helps enforce that devices communicating with our servers are real devices, owned by real people.

As the leader in enterprise fraud prevention, Singular is the only vendor with these capabilities. Using this technology, we’ve saved our customers from wasting hundreds of millions of dollars on fraudulent activities. This doesn’t just raise the bar; it makes spoofing our traffic virtually impossible.

If you’re unsure about your current security and want to talk to our fraud and security experts, come talk to us: fraud@singular.net.
